Since 2018, the consortium MLCommons has been running a sort of Olympics for AI training. The competition, called MLPerf, consists of a set of tasks for training specific AI models on predefined datasets to a certain accuracy. Essentially, these tasks, called benchmarks, test how well a hardware and low-level software configuration is set up to train a particular AI model.

Twice a year, companies put together their submissions – typically, clusters of CPUs and GPUs and the software optimized for them – and compete to see whose submission can train the model the fastest.

There is no doubt that since MLPerf’s inception, the state-of-the-art hardware for AI training has improved dramatically. Over the past few years, Nvidia has released four new generations of GPUs that have since become the industry standard (the latest, Nvidia’s Blackwell GPU, is not yet standard but is growing in popularity). Companies competing in MLPerf are also using large clusters of GPUs to tackle the training tasks.

However, the MLPerf benchmarks have also become tougher. This increased rigor is by design: the benchmarks are trying to keep pace with the industry, says David Kanter, head of MLPerf. “The benchmarks are meant to be representative,” he says.

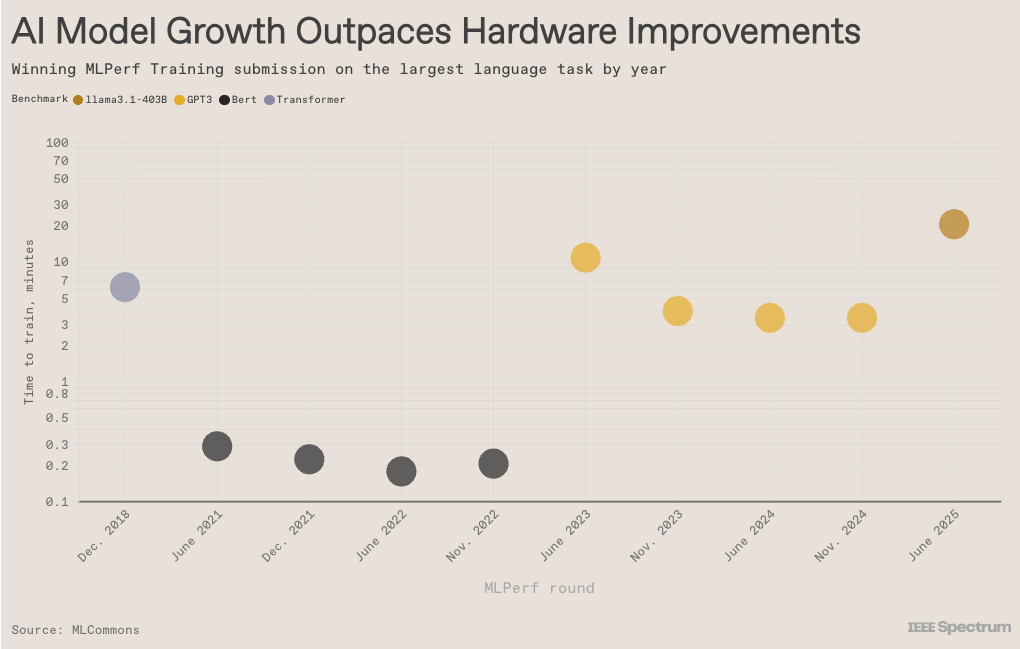

Interestingly, the data shows that large language models and their predecessors have been growing in size faster than the hardware has been able to keep up. So every time a new benchmark is introduced, the fastest training time gets longer. Then, hardware improvements gradually bring the training time back down, only to be thwarted again by the next benchmark. Then the cycle repeats itself.
