OpenAI's o3 API is now cheaper for developers, with no effect on performance.

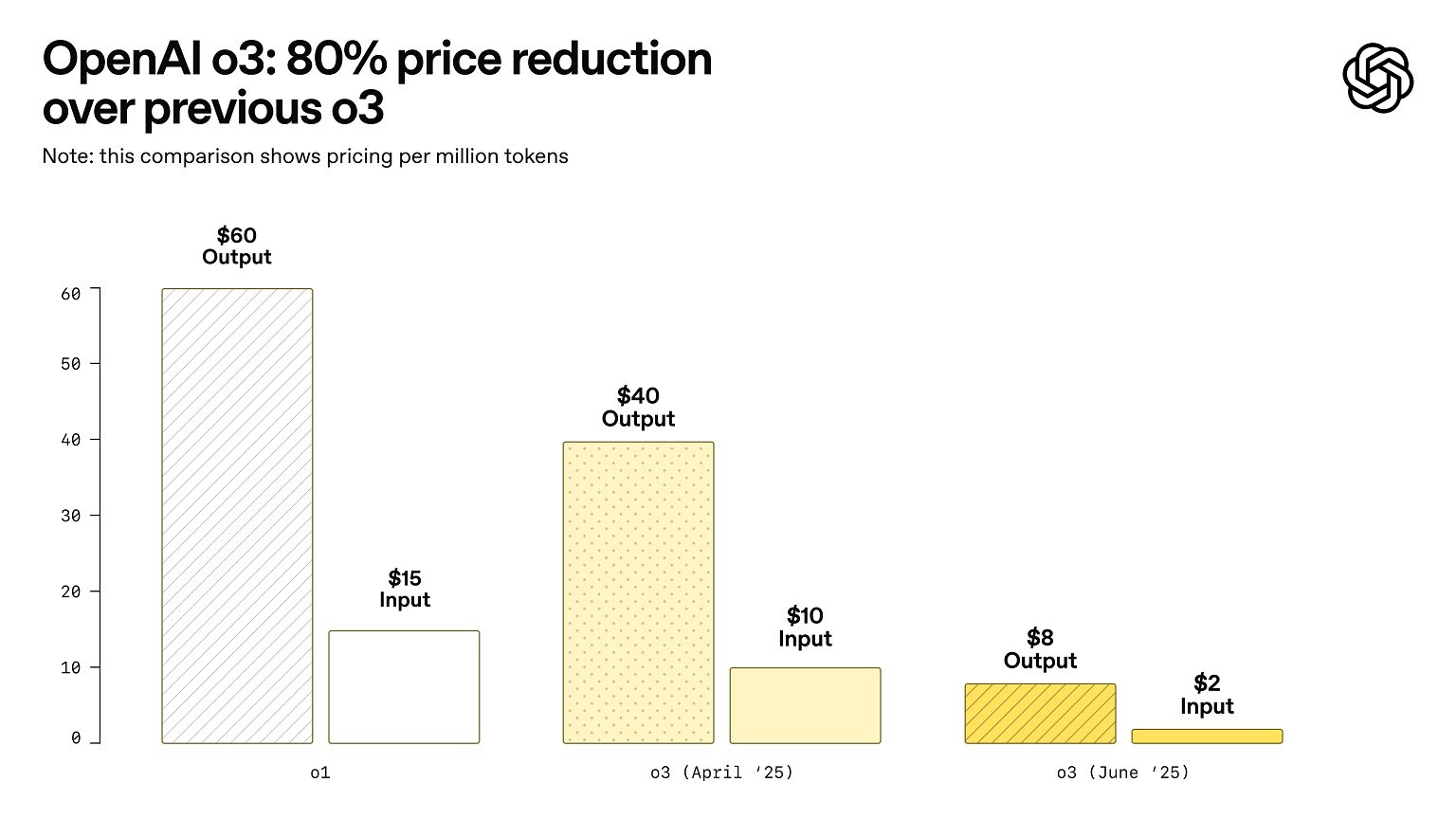

On Wednesday, OpenAI announced that it was cutting the price of o3, its best reasoning model, by 80%.

This means that o3's input price is now $2 per million tokens, while its output price has come down to $8 per million tokens.
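To make the numbers concrete, here is a minimal sketch of the arithmetic, using only the prices and the 80% figure quoted above. The function names and the example token counts are illustrative assumptions, not part of any OpenAI library.

```python
# Prices in USD per million tokens, as quoted in the article.
INPUT_PRICE_PER_M = 2.0
OUTPUT_PRICE_PER_M = 8.0
PRICE_CUT_PERCENT = 80  # size of the announced reduction


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD of one request at the new o3 prices."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


def price_before_cut(new_price: float, cut_percent: int = PRICE_CUT_PERCENT) -> float:
    """Price implied before the cut, derived from the new price and the cut size."""
    return new_price * 100 / (100 - cut_percent)


if __name__ == "__main__":
    # Hypothetical request: 50,000 input tokens and 5,000 output tokens.
    print(f"Cost at new prices: ${request_cost(50_000, 5_000):.2f}")
    print(f"Implied old input price: ${price_before_cut(INPUT_PRICE_PER_M):.2f} per million tokens")
    print(f"Implied old output price: ${price_before_cut(OUTPUT_PRICE_PER_M):.2f} per million tokens")
```

Under these assumptions, an 80% cut to reach $2 and $8 implies earlier prices of $10 and $40 per million tokens, so the same request costs one fifth of what it did before.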

“We optimized our inference stack that serves o3. Same exact model – just cheaper,” OpenAI noted in a post on X.

While regular users do not usually access ChatGPT models through the API, the price drop makes tools that rely on the API, such as Cursor and Windsurf, much cheaper to run.

In a post on X, the independent benchmarking community ARC Prize confirmed that the performance of the o3-2025-04-16 model did not change after the price reduction.

“We compared the retest results with the original results and did not see any difference in performance,” the organization said.

This confirms that OpenAI did not swap out the o3 model to reduce the price. Instead, the company optimized the inference stack that powers the model.

In addition, OpenAI rolled out the o3-pro model in the API, which uses more compute to deliver better results.