Anthropic’s Claude Code large language model has been abused by threat actors who used it in data extortion campaigns and to develop ransomware packages.

The company says its tool has also been used in fraudulent North Korean IT worker schemes and Contagious Interview campaigns, in Chinese APT campaigns, and by a Russian-speaking developer to create malware with advanced evasion capabilities.

AI-made ransomware

In one case, tracked as ‘GTG-5004’, a UK-based threat actor used Claude Code to develop and commercialize a ransomware-as-a-service (RaaS) operation.

The AI utility helped create all the required tools for the RaaS platform, implementing the ChaCha20 stream cipher with RSA key management in the modular ransomware, along with shadow copy deletion, options for targeting specific files, and the ability to encrypt network shares.

On the evasion front, the ransomware loads via reflective DLL injection and features syscall invocation techniques, API hooking bypass, string obfuscation, and anti-debugging.

Anthropic says the threat actor relied almost entirely on Claude to implement the most knowledge-demanding parts of the RaaS platform, noting that, without AI assistance, they would most likely have failed to produce working ransomware.

“The most striking discovery is the actor’s complete dependence on AI to develop functional malware,” the report said.

“This operator does not appear capable of implementing encryption algorithms, anti-analysis techniques, or Windows internals manipulation without Claude’s assistance.”

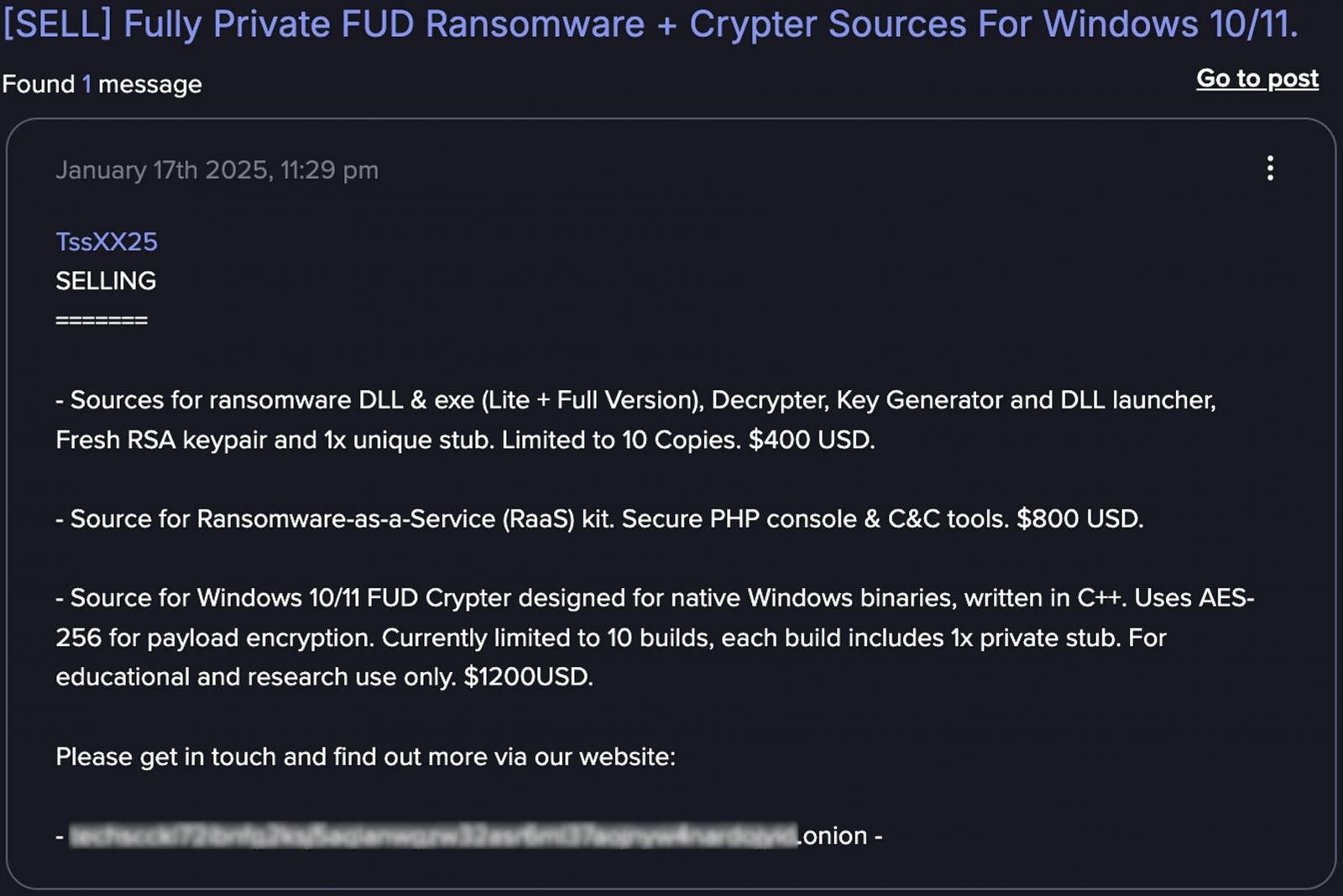

After creating the RAAS operation, the danger actor offered windows crapes for $ 400 to $ 1,200 on dark web forums like ransomware executable, PHP console and command-control (C2) with infrastructure, and dark web forums such as Dark Web forums such as Drade, Cryptb, and Nulled.

Source: Anthropic

AI-operated extortion campaign

In one of the analyzed cases, which Anthropic tracks as ‘GTG-2002’, a cybercriminal used Claude as an active operator to run a data extortion campaign against at least 17 organizations in the government, healthcare, financial, and emergency services sectors.

The AI agent performed network reconnaissance and helped the threat actor achieve initial access, and then produced custom malware based on the Chisel tunneling tool to use for exfiltrating sensitive data.

After the attack failed, Claude Code was used to help the malware hide itself better by providing techniques for string encryption, anti-debugging code, and filename masquerading.

Claude was later used to analyze the stolen files to set ransom demands, which ranged from $75,000 to $500,000, and even to produce custom HTML ransom notes for each victim.

“Claude not only performed ‘on-keyboard’ operations but also analyzed exfiltrated financial data to determine appropriate ransom amounts and generated visually alarming HTML ransom notes that were displayed on victim machines by embedding them into the boot process,” Anthropic said.

Anthropic called the attack an example of “vibe hacking”, a term reflecting the use of AI coding agents as active partners in cybercrime rather than as tools employed outside the context of the operation.

Anthropic’s report includes additional examples where Claude Code was put to illegal use, though in less complex operations. The company says its LLM assisted a threat actor in developing advanced API integration and resilience mechanisms for a carding service.

Another cybercriminal leveraged the AI for romance scams, using it to generate replies with “high emotional intelligence”, to improve the profiles used to target victims, to develop manipulation content, and to provide multi-language support for wider targeting.

For each of the presented cases, the AI developer provides tactics and techniques that may help other researchers uncover new cybercriminal activity or link it to a related illegal operation.

Anthropic has banned all accounts linked to the malicious operations it detected, built tailored classifiers to detect suspicious usage patterns, and shared technical indicators with external partners to help defend against these cases of AI misuse.