Snapchat has been experimenting with AI-generated augmented reality lenses in its app for the last few years. Now, the company is letting users create their own with a new standalone app for making AR effects.

Snap is introducing a new version of its Lens Studio software that allows anyone to create AR lenses through text prompts and other simple editing tools, and to publish them directly to Snapchat. Until now, Lens Studio has been available only as a desktop app for developers and AR professionals. And while the new iOS app and web version aren't nearly as powerful, they offer a wide range of options thanks to generative AI and a variety of body-tracking effects.

"These are new tools that make it easier than ever to create, publish and play with Snapchat Lenses you've made," the company says in a blog post. "Now, you can generate your own AI effects, add your dancing Bitmoji to the fun, and express yourself with Lenses that reflect your mood or inside jokes, whether you're at your computer or not."

Snap gave me an early look at the Lens Studio iOS app, and I was surprised by how flexible it is. Detailed text prompts drive AI-powered tools that can transform your face, body and backgrounds (the app also suggests prompts that work well, such as "wide zombie head with big eyes and nose, lots of details").

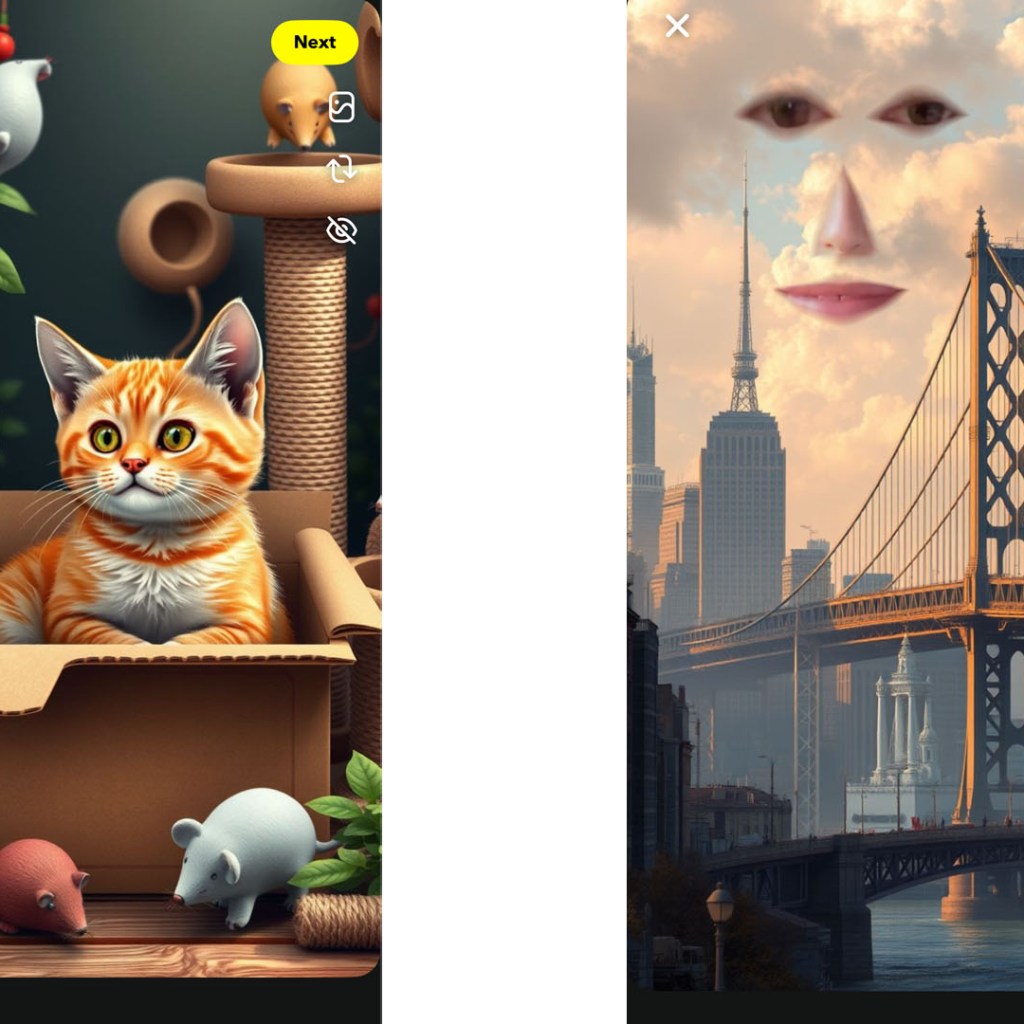

I found that each type of effect has a learning curve before it works well, and some generative AI prompts can take up to 20 minutes to render. But the app also offers dozens of templates that you can use as a starting point and remix with your own ideas. You can also create simpler face-altering filters that don't rely as heavily on AI, but instead take advantage of popular Snapchat effects such as face cutouts or Bitmoji animations. (Some examples of my creations are below; both used AI to generate a background, on top of which I layered other effects.)

Snap already has hundreds of lens creators, some of whom have been making effects for the app for years. But I can easily see this new, simpler version of Lens Studio opening the door to many more. There may also be some upside for creators hoping to cash in on Snapchat's monetization programs: the company confirmed that users publishing lenses from the new app will be eligible to participate in its Lens Creator Rewards program, which pays creators who make popular AR effects.

A more accessible version of Lens Studio could also help Snap compete with Meta for AR talent. (Meta shut down Spark AR, its platform that allowed creators to make AR effects for Instagram, last year.) In addition to Snapchat's in-app effects, the company is now on its second generation of standalone AR glasses. Recently, Snap has focused on courting big-name developers to create glasses-ready effects, but the company has previously leaned on lens creators to come up with interesting use cases for AR glasses. Those types of integrations will likely require much more than what's available in the new pared-down version of Lens Studio, but the company may one day be able to make AR creation more accessible (with the help of AI).

Jim Lanzone, CEO of Yahoo, Engadget's parent company, joined Snap's board of directors on September 12, 2024. No one outside of Engadget's editorial team has any say in our coverage of the company.

This article originally appeared on Engadget